It's Day 30 of my 100 Days of Cloud journey, and today's post continues my learning through the next 2 modules of my AWS Skillbuilder course on AWS Cloud Practitioner Essentials.

This is the official pre-requisite course on the AWS Skillbuilder platform (which for comparison is the AWS equivalent of Microsoft Learn) to prepare candidates for the AWS Certified Cloud Practitioner certification exam.

Let’s have a quick overview of what the 2 modules I completed today covered, the technologies discussed and key takeaways.

Module 5 – Storage and Databases

Storage

The first thing covered was the storage types available with EC2 instances:

- An instance store provides temporary block-level storage for an Amazon EC2 instance. An instance store is disk storage that is physically attached to the host computer for an EC2 instance, and therefore has the same lifespan as the instance. When the instance is terminated, you lose any data in the instance store.

- Amazon Elastic Block Store (Amazon EBS) is a service that provides block-level storage volumes that you can use with Amazon EC2 instances. If you stop or terminate an Amazon EC2 instance, all the data on the attached EBS volume remains available. It is important to note that an EBS volume stores data in a single Availability Zone, and it must be in the same Availability Zone as the EC2 instance it is attached to.

- An EBS snapshot is an incremental backup. This means that the first backup taken of a volume copies all the data. For subsequent backups, only the blocks of data that have changed since the most recent snapshot are saved.
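To make the incremental idea concrete, here is a minimal sketch in plain Python. It is a toy model and not how EBS works internally: the `take_snapshot` and `restore` helpers and the dictionary-based "volume" are my own assumptions for illustration, and the sketch ignores deleted blocks.

```python
from typing import Dict, List

Volume = Dict[int, bytes]  # hypothetical model: block index -> block contents


def restore(snapshots: List[Volume]) -> Volume:
    """Rebuild the full volume by replaying snapshots from oldest to newest."""
    state: Volume = {}
    for snap in snapshots:
        state.update(snap)
    return state


def take_snapshot(volume: Volume, previous: List[Volume]) -> Volume:
    """Keep only the blocks that differ from the state captured so far."""
    last_state = restore(previous)
    return {i: data for i, data in volume.items() if last_state.get(i) != data}


volume: Volume = {0: b"boot", 1: b"logs", 2: b"data"}
snapshots: List[Volume] = []

snapshots.append(take_snapshot(volume, snapshots))  # first snapshot copies every block
volume[1] = b"logs-v2"                              # one block changes
snapshots.append(take_snapshot(volume, snapshots))  # second snapshot stores only that block

print([len(s) for s in snapshots])  # [3, 1]
```

The first snapshot holds all three blocks, the second holds only the block that changed, and replaying both gives back the current volume.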

Moving on from that, we looked at Amazon S3 (Simple Storage Service), which provides object-level storage. Objects are stored in buckets (which are like folders or containers). You can upload any type of file to an S3 bucket, and S3 offers unlimited storage space. You can set permissions on any object you upload. S3 provides a number of storage classes, and which one you use depends on how you plan to store your data and how frequently or infrequently you access it:

- S3 Standard: designed for frequently accessed data and high availability, and stores data in a minimum of 3 Availability Zones.

- S3 Standard-IA: used for infrequently accessed data, and still stores the data across a minimum of 3 Availability Zones.

- S3 One Zone-IA: same as above, but only stores data in a single Availability Zone to keep costs low.

- S3 Intelligent-Tiering: used for data with unknown or changing access patterns.

- S3 Glacier: low-cost storage that is ideal for data archiving. Data can be retrieved within a few minutes to a few hours.

- S3 Glacier Deep Archive: lower cost than above, and data is retrieved within 12 hours.
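One way I found to remember the classes is to write the choice down as a small decision function. This is only a sketch of the list above: `pick_storage_class` and its parameters are hypothetical names of mine, not part of any AWS SDK, and real decisions would also weigh pricing and retrieval fees.

```python
def pick_storage_class(frequent_access: bool,
                       known_pattern: bool = True,
                       archive: bool = False,
                       can_wait_12_hours: bool = False,
                       single_az_ok: bool = False) -> str:
    """Map a rough access profile to one of the S3 storage classes listed above."""
    if archive:
        # Archival data: the cheapest tier applies if a 12 hour retrieval is acceptable.
        return "S3 Glacier Deep Archive" if can_wait_12_hours else "S3 Glacier"
    if not known_pattern:
        return "S3 Intelligent-Tiering"
    if frequent_access:
        return "S3 Standard"
    # Infrequently accessed data: trade resilience for cost if one AZ is enough.
    return "S3 One Zone-IA" if single_az_ok else "S3 Standard-IA"


print(pick_storage_class(frequent_access=True))                       # S3 Standard
print(pick_storage_class(frequent_access=False, single_az_ok=True))   # S3 One Zone-IA
print(pick_storage_class(frequent_access=False, archive=True,
                         can_wait_12_hours=True))                     # S3 Glacier Deep Archive
```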

Next up is Amazon Elastic File System (Amazon EFS), which is a scalable file storage system that is used with AWS Cloud and on-premises resources. You can attach EFS to a single EC2 instance or to multiple instances. It is a file system for Linux, and multiple instances can read from and write to it at the same time. Amazon EFS file systems store data across multiple Availability Zones.

Database Types

Now we’re into the different types of Databases that are available on AWS.

Amazon Relational Database Service (Amazon RDS) enables you to run relational databases such as MySQL, PostgreSQL, Oracle and Microsoft SQL Server. You can move on-premises SQL Servers to EC2 instances, or else move the databases on these servers to Amazon RDS instances. You also have the option to move MySQL or PostgreSQL databases to Amazon Aurora, which is priced at about 1/10th of the cost of commercial databases and replicates six copies of your data across 3 Availability Zones.

Amazon DynamoDB is a serverless NoSQL or non-relational key-value database that uses tables and attributes. It has specific use cases and is highly scalable.
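To picture what "key-value with tables and attributes" means, here is a toy in-memory table in plain Python. It is not the DynamoDB API: the `KeyValueTable` class and the sample attribute names are assumptions of mine, used only to show that every item is looked up by its key and that items do not all need the same attributes.

```python
from typing import Any, Dict, Optional

Item = Dict[str, Any]


class KeyValueTable:
    """Hypothetical in-memory stand-in for a key-value table."""

    def __init__(self, key_name: str) -> None:
        self.key_name = key_name
        self._items: Dict[Any, Item] = {}

    def put_item(self, item: Item) -> None:
        if self.key_name not in item:
            raise ValueError(f"item is missing key attribute {self.key_name!r}")
        # Store a copy, indexed by the value of the key attribute.
        self._items[item[self.key_name]] = dict(item)

    def get_item(self, key: Any) -> Optional[Item]:
        return self._items.get(key)


staff = KeyValueTable(key_name="employee_id")
staff.put_item({"employee_id": "e-001", "name": "Ana", "role": "barista"})
staff.put_item({"employee_id": "e-002", "name": "Raj", "start_date": "2021-06-01"})

print(staff.get_item("e-002")["name"])  # Raj
```

Note that the two items share only the key attribute and `name`; there is no fixed schema beyond the key.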

Amazon Redshift is a data warehousing service used for big data analytics, and has the ability to collect data from multiple sources and analyse relationships and trends across your data.

On top of the above, there is AWS Database Migration Service (AWS DMS), which can help you migrate existing databases to AWS. The source database remains active during the migration, and the source and destination databases do not need to be the same type of database.

Module 6 – Security

Shared Responsibility Model

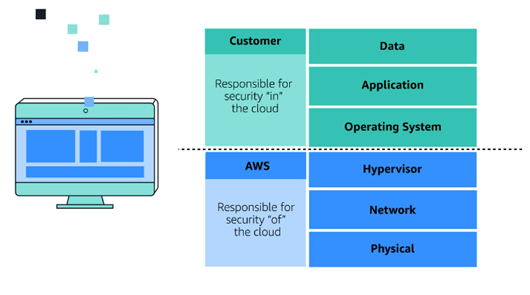

We kicked off the Security Module by looking at the Shared Responsibility Model. This will be familiar from any cloud service: AWS is responsible for some parts of the environment, and the customer is responsible for other parts.

The shared responsibility model divides into customer responsibilities (commonly referred to as “security in the cloud”) and AWS responsibilities (commonly referred to as “security of the cloud”).

Identity and Access Control

Onwards to Identity! AWS Identity and Access Management (IAM) allows you to manage access to AWS services and resources securely. You do this by using a combination of users, groups and roles, policies, and MFA. When you first create an AWS account, you start with an identity called the root user. You can then use the root user to create an IAM user that you will use to perform everyday tasks. This is the same concept as on any Linux system: you should not use the root account for day-to-day work. You can then add the user to an IAM group.

You then create policies that allow or deny permissions to specific AWS resources and apply those policies to the user or group.
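As a rough illustration of what a policy looks like, the sketch below builds a policy document as a Python dictionary and checks requests against it. The document layout (`Version`, `Statement`, `Effect`, `Action`, `Resource`) follows the IAM JSON policy format, but the bucket name is made up and `is_allowed` is a simplified evaluator of my own that uses `fnmatchcase` for wildcards; real IAM evaluation has many more rules.

```python
import json
from fnmatch import fnmatchcase
from typing import Any, Dict, List

policy: Dict[str, Any] = {
    "Version": "2012-10-17",
    "Statement": [
        {   # allow reading any object in a hypothetical bucket
            "Effect": "Allow",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-bucket/*",
        },
        {   # but explicitly deny anything under the secret/ prefix
            "Effect": "Deny",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-bucket/secret/*",
        },
    ],
}


def _as_list(value: Any) -> List[str]:
    return value if isinstance(value, list) else [value]


def is_allowed(policy: Dict[str, Any], action: str, resource: str) -> bool:
    """Simplified check: an explicit Deny wins, and no matching Allow means denied."""
    allowed = False
    for stmt in policy["Statement"]:
        action_match = any(fnmatchcase(action, a) for a in _as_list(stmt["Action"]))
        resource_match = any(fnmatchcase(resource, r) for r in _as_list(stmt["Resource"]))
        if not (action_match and resource_match):
            continue
        if stmt["Effect"] == "Deny":
            return False
        allowed = True
    return allowed


print(json.dumps(policy, indent=2))
print(is_allowed(policy, "s3:GetObject", "arn:aws:s3:::example-bucket/report.csv"))      # True
print(is_allowed(policy, "s3:GetObject", "arn:aws:s3:::example-bucket/secret/key.txt"))  # False
print(is_allowed(policy, "s3:PutObject", "arn:aws:s3:::example-bucket/report.csv"))      # False
```

The last call shows the default: anything not explicitly allowed is denied.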

You then have the concept of roles – roles are identities that can be used to gain temporary access to perform a specific task. When a user assumes a role, they give up all previous permissions they had.
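Here is a small sketch of the two properties of a role described above: access is temporary, and the session carries only the role's permissions. The `Session` class, `assume_role` helper, one hour default duration and the action names are all hypothetical, chosen for illustration rather than taken from any AWS API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Session:
    identity: str
    permissions: FrozenSet[str]
    expires_at: Optional[datetime] = None  # None means no expiry in this toy model

    def can(self, action: str, now: datetime) -> bool:
        if self.expires_at is not None and now >= self.expires_at:
            return False  # temporary access has run out
        return action in self.permissions


def assume_role(role_name: str, role_permissions: FrozenSet[str], now: datetime,
                duration: timedelta = timedelta(hours=1)) -> Session:
    """Return a temporary session that carries only the role's permissions."""
    return Session(role_name, role_permissions, now + duration)


now = datetime(2024, 1, 1, tzinfo=timezone.utc)
user = Session("cashier", frozenset({"pos:Sell"}))
inventory = assume_role("inventory-role", frozenset({"stock:Count"}), now)

print(user.can("pos:Sell", now))                               # True
print(inventory.can("pos:Sell", now))                          # False
print(inventory.can("stock:Count", now))                       # True
print(inventory.can("stock:Count", now + timedelta(hours=2)))  # False
```

While acting under the role, the old `pos:Sell` permission is gone, and once the session expires the role's own permission stops working too.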

Finally, you should always enable MFA (multi-factor authentication) to provide an extra layer of security for your AWS account.

Managing Multiple AWS Accounts

But what if we have multiple AWS accounts that we need to manage? This is where AWS Organizations comes into play. AWS Organizations can consolidate and manage multiple AWS accounts. This is useful if you have separate accounts for Production, Development and Testing. You can then group AWS accounts into Organizational Units (OUs). In AWS Organizations, you can apply service control policies (SCPs) to the organization root, an individual member account, or an OU. An SCP affects all IAM users, groups, and roles within an account, including the AWS account root user.
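The way I think about SCPs is that they set an upper limit and never grant anything by themselves: an action only works if the IAM policy allows it and every SCP between the organization root and the account allows it too. A minimal sketch of that intersection, using plain sets of action names instead of real policy documents (the `effective_actions` helper and the sample SCP contents are my own assumptions):

```python
from functools import reduce
from typing import Iterable, Set


def effective_actions(iam_allowed: Set[str], scps_on_path: Iterable[Set[str]]) -> Set[str]:
    """Intersect the IAM-allowed actions with every SCP from the root down to the account."""
    return reduce(lambda allowed, scp: allowed & scp, scps_on_path, set(iam_allowed))


# Hypothetical SCPs attached at each level of the hierarchy.
root_scp = {"s3:GetObject", "s3:PutObject", "ec2:RunInstances", "rds:CreateDBInstance"}
dev_ou_scp = {"s3:GetObject", "s3:PutObject", "ec2:RunInstances"}
account_scp = {"s3:GetObject", "ec2:RunInstances"}

# What the user's IAM policies allow inside the account.
iam_allowed = {"s3:GetObject", "s3:PutObject", "rds:CreateDBInstance"}

result = effective_actions(iam_allowed, [root_scp, dev_ou_scp, account_scp])
print(sorted(result))  # ['s3:GetObject']
```

Even though the IAM policy allows three actions, only the one that every SCP also allows survives.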

Compliance

Now we move on to Compliance. AWS Artifact is a service that provides on-demand access to AWS security and compliance reports and select online agreements. AWS Artifact consists of two main sections: AWS Artifact Agreements and AWS Artifact Reports:

- In AWS Artifact Agreements, you can review, accept, and manage agreements for an individual account and for all your accounts in AWS Organizations.

- AWS Artifact Reports provide compliance reports from third-party auditors.

Security

Finally, we reach the deeper level security and defence stuff!

AWS Shield provides built-in protection against DDoS attacks. AWS Shield provides 2 levels of protection:

- Standard – automatically protects all AWS customers from the most frequent types of DDoS attacks.

- Advanced – on top of Standard, provides detailed attack diagnostics and the ability to detect and mitigate sophisticated DDoS attacks. It can also integrate with services like Amazon Route 53 and Amazon CloudFront.

AWS also offers the following security services:

- AWS WAF (Web Application Firewall), which lets you monitor and filter incoming web requests from bad actors using rules in a web access control list (ACL).

- AWS Key Management Service (AWS KMS), which lets you create and manage the cryptographic keys used to encrypt and decrypt data.

- Amazon Inspector, which runs automated security assessments on your infrastructure based on compliance baselines.

- Amazon GuardDuty, which provides intelligent threat detection by monitoring network activity and account behaviour.

And that’s all for today! Hope you enjoyed this post, join me again next time for more AWS Core Concepts! And more importantly, go and enroll in the course using the links at the top of the post. This is my brief summary and understanding of the modules, but the course is well worth taking if you want to get more in-depth.