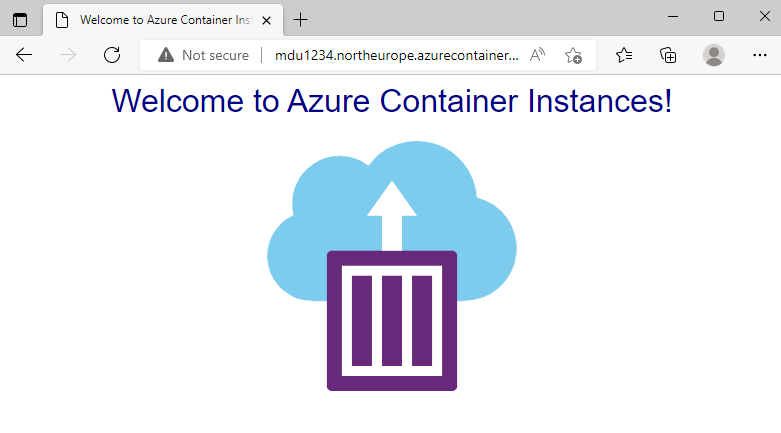

It's Day 85 of my 100 Days of Cloud journey, and in today's post I'm looking at the options for Container Security in Azure.

We looked at an overview of Containers on Day 81: they work like virtual machines in that they use the underlying resources offered by the Container Host, but instead of packaging your code with an Operating System, each container contains only the code and dependencies needed to run the application and runs as an isolated process on the host's OS kernel. This means that containers are smaller, more portable, and much faster to deploy and run.

We need to secure Containers in the same way as we would any other service running on the Public Cloud. Let's take a look at the different options that are available to us for securing Containers.

Use a Private registry

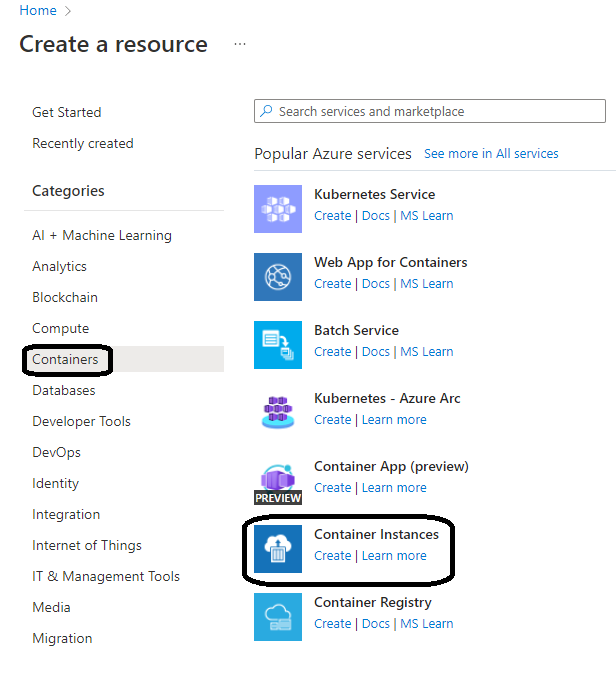

Containers are built from images that are stored in one of three places: a public repository such as Docker Hub, a private registry such as Docker Trusted Registry (which can be installed on-premises or in a virtual private cloud), or a cloud-based private registry such as Azure Container Registry.

Like all software that is publicly available on the internet, a publicly available container image does not guarantee security. Container images consist of multiple software layers, and each software layer might have vulnerabilities.

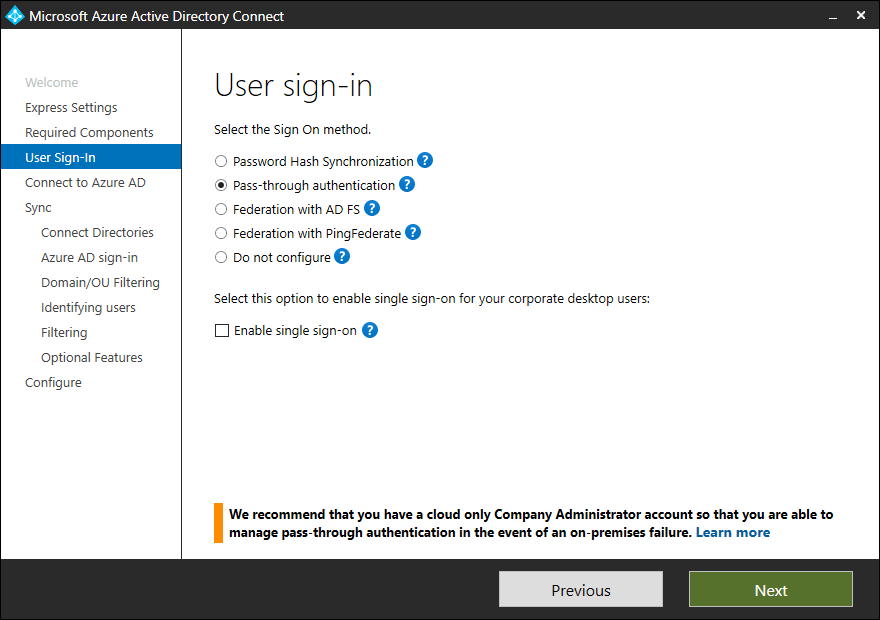

To help reduce the threat of attacks, you should store and retrieve images from a private registry, such as Azure Container Registry or Docker Trusted Registry. In addition to providing a managed private registry, Azure Container Registry supports service principal-based authentication through Azure Active Directory for basic authentication flows. This authentication includes role-based access for read-only (pull), write (push), and other permissions.
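To make the "pull only from a private registry" idea concrete, here is a minimal sketch of a check you could run in a build pipeline. The registry name `myregistry.azurecr.io` and the function names are my own placeholders, not anything Azure provides. The host detection follows Docker's convention that the first path component is a registry only if it contains a dot or a colon, or is `localhost`.

```python
# Hypothetical allowlist: replace with the login server of your own registry.
APPROVED_REGISTRIES = {"myregistry.azurecr.io"}


def registry_of(image_ref: str) -> str:
    """Return the registry host of an image reference (Docker Hub if none is given)."""
    first, sep, _rest = image_ref.partition("/")
    # Docker treats the first component as a registry host only when it looks
    # like a hostname (contains "." or ":") or is exactly "localhost".
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first.lower()
    return "docker.io"


def is_from_approved_registry(image_ref: str) -> bool:
    """True when the image would be pulled from an approved private registry."""
    return registry_of(image_ref) in APPROVED_REGISTRIES
```

A pipeline step could call `is_from_approved_registry` on every image named in a deployment manifest and fail the build when it returns `False`.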

Ensure that only approved images are used in your environment

Allow only approved container images. Have tools and processes in place to monitor for and prevent the use of unapproved container images. One option is to control the flow of container images into your development environment. For example, you might allow only a single approved Linux distribution as a base image in order to minimize the surface for potential attacks.
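One way to enforce a single approved base image is to scan Dockerfiles before they are built. The sketch below is an illustration only: the approved repository name is a placeholder, the helper names are mine, and the parser handles the common `FROM image[:tag] [AS stage]` form rather than every Dockerfile feature (for example, it does not expand `ARG` variables).

```python
# Hypothetical allowlist of approved base image repositories (no tag).
APPROVED_BASE_IMAGES = {"myregistry.azurecr.io/base/ubuntu"}


def repository(image: str) -> str:
    """Strip any digest or tag from an image reference."""
    name = image.split("@", 1)[0]
    head, sep, tail = name.rpartition(":")
    # A colon followed by a "/" belongs to a host:port, not to a tag.
    if sep and "/" not in tail:
        name = head
    return name


def base_images(dockerfile_text: str) -> list[str]:
    """Return the external images named in FROM lines, skipping earlier build stages."""
    images, stages = [], set()
    for line in dockerfile_text.splitlines():
        parts = line.strip().split()
        if len(parts) < 2 or parts[0].upper() != "FROM":
            continue
        # Ignore flags such as --platform=linux/amd64.
        args = [p for p in parts[1:] if not p.startswith("--")]
        if not args:
            continue
        image = args[0]
        if image.lower() not in stages:
            images.append(image)
        if len(args) >= 3 and args[1].upper() == "AS":
            stages.add(args[2].lower())
    return images


def unapproved_base_images(dockerfile_text: str) -> list[str]:
    """Return the base images that are not on the allowlist."""
    return [i for i in base_images(dockerfile_text)
            if repository(i) not in APPROVED_BASE_IMAGES]
```

An empty list from `unapproved_base_images` means every stage in the Dockerfile starts from an approved image.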

Another option is to utilize Azure Container Registry support for Docker’s content trust model, which allows image publishers to sign images that are pushed to a registry, and image consumers to pull only signed images.
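On the consumer side, the Docker CLI enforces content trust when the `DOCKER_CONTENT_TRUST` environment variable is set to `1`. As a rough sketch (the helper names are my own, and it assumes the `docker` CLI is installed and you are already logged in to the registry), a script could wrap pulls like this:

```python
import os
import subprocess


def content_trust_env(base_env=None) -> dict:
    """Return a copy of the environment with Docker content trust switched on."""
    env = dict(os.environ if base_env is None else base_env)
    env["DOCKER_CONTENT_TRUST"] = "1"
    return env


def pull_signed(image_ref: str) -> None:
    """Pull an image with content trust enabled; raises if the pull fails."""
    # Assumes the docker CLI is on PATH. With content trust enabled, the pull
    # is rejected when the tag has no valid signature.
    subprocess.run(["docker", "pull", image_ref],
                   env=content_trust_env(), check=True)
```

Setting the variable per command like this, rather than globally, keeps the enforcement explicit in the script that depends on it.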

Monitoring and Scanning Images

Use solutions that have the ability to scan container images in a private registry and identify potential vulnerabilities. Azure Container Registry optionally integrates with Microsoft Defender for Cloud to automatically scan all Linux images pushed to a registry to detect image vulnerabilities, classify them, and provide remediation guidance.
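Once a scanner has classified its findings, a common next step is to block deployment when anything at or above a chosen severity is present. The sketch below shows that gating logic only. The finding format (a dict with `id` and `severity` keys) and the severity names are assumptions for illustration, not the actual output schema of Microsoft Defender for Cloud.

```python
# Assumed severity scale, lowest to highest.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def blocking_findings(findings: list[dict], threshold: str = "high") -> list[dict]:
    """Return the findings at or above the threshold severity."""
    limit = SEVERITY_RANK[threshold]
    # Unknown severities default to the limit, so they block (fail closed).
    return [f for f in findings
            if SEVERITY_RANK.get(f["severity"].lower(), limit) >= limit]


def image_is_deployable(findings: list[dict], threshold: str = "high") -> bool:
    """True when no finding reaches the threshold severity."""
    return not blocking_findings(findings, threshold)
```

Treating unrecognized severities as blocking is a deliberate choice here: a gate that silently ignores values it does not understand is easy to bypass by accident.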

Credentials

Credential management is one of the most basic types of security. Because containers can spread across several clusters and Azure regions, you need to secure the credentials required for logins or API access, such as passwords or tokens.

Use tools such as TLS encryption for secrets data in transit, least-privilege Azure role-based access control (Azure RBAC), and Azure Key Vault to securely store encryption keys and secrets (such as certificates, connection strings, and passwords) for containerized applications.
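As an illustration of reading a secret from Key Vault at runtime instead of baking it into the image, here is a minimal sketch using the `azure-identity` and `azure-keyvault-secrets` packages. The vault URL and secret name in the usage note are placeholders, and the code assumes the container's identity has been granted permission to read secrets from the vault.

```python
def make_secret_client(vault_url: str):
    """Build a Key Vault SecretClient that authenticates with the ambient identity."""
    # Imported here so the module can be loaded without the Azure SDK installed.
    from azure.identity import DefaultAzureCredential
    from azure.keyvault.secrets import SecretClient

    return SecretClient(vault_url=vault_url, credential=DefaultAzureCredential())


def read_secret(client, name: str) -> str:
    """Fetch the current value of a secret by name."""
    return client.get_secret(name).value
```

Usage would look something like `read_secret(make_secret_client("https://<your-vault>.vault.azure.net"), "db-password")`, where both the vault and the secret name are your own.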

Removing unneeded privileges from Containers

You can also minimize the potential attack surface by removing any unused or unnecessary processes or privileges from the container runtime. Privileged containers run as root. If a malicious user or workload breaks out of a privileged container, it will then be running as root on the host system.
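If your containers run on Kubernetes (for example on Azure Kubernetes Service), privileges are controlled through each container's `securityContext`. The sketch below checks a `securityContext`, already parsed into a dict, for the settings most often associated with excess privilege. The function name and the wording of the findings are mine, and the list of checks is a starting point rather than a complete policy.

```python
def privilege_findings(security_context: dict) -> list[str]:
    """Return a list of privilege-related problems in a container securityContext."""
    findings = []
    if security_context.get("privileged", False):
        findings.append("container is privileged")
    # Kubernetes allows privilege escalation unless it is explicitly disabled.
    if security_context.get("allowPrivilegeEscalation", True):
        findings.append("privilege escalation is not disabled")
    if not security_context.get("runAsNonRoot", False):
        findings.append("container may run as root")
    dropped = security_context.get("capabilities", {}).get("drop", [])
    if "ALL" not in dropped:
        findings.append("Linux capabilities are not all dropped")
    return findings
```

A hardened context (`privileged: false`, `allowPrivilegeEscalation: false`, `runAsNonRoot: true`, `capabilities.drop: ["ALL"]`) produces an empty list.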

Enable Audit Logging for all Container administrative user access

Use native Azure solutions to maintain an accurate audit trail of administrative access to your container ecosystem. These logs might be necessary for auditing purposes and will be useful as forensic evidence after any security incident. Azure solutions include:

- Integration of Azure Kubernetes Service with Microsoft Defender for Cloud to monitor the security configuration of the cluster environment and generate security recommendations

- Azure Container Monitoring solution

- Resource logs for Azure Container Instances and Azure Container Registry

Conclusion

So that's a brief overview of how we can secure containers running in Azure and ensure that we are only using approved images that have been scanned for vulnerabilities.

Hope you enjoyed this post, until next time!