It's Day 69 of my 100 Days of Cloud journey, and today I’m getting my head around Azure Logic Apps.

Azure Logic Apps is a cloud-based platform for creating and running automated workflows that integrate your apps, data, services, and systems.

Comparison with Azure Functions

If this sounds vaguely familiar, it should: all the way back on Day 55 we looked at Azure Functions, which lets you create serverless applications in Azure.

So they both do the same thing, right? Well, yes and no. At this stage it's important to show the differences between them.

Let's start with Azure Functions:

- Lets you run event-triggered code without having to explicitly provision or manage infrastructure.

- Azure Functions have a “Code-First” (imperative) user experience and are primarily authored in Visual Studio or another IDE.

- Azure Functions have about a dozen built-in binding types (mainly for other Azure services). If there isn’t an existing binding, you will need to write custom code to create new bindings.

- With Azure Functions, you have 3 pricing options. You can opt for an App Service Plan, which gives you dedicated resources. The second option is the fully serverless Consumption plan, which is billed on resources consumed and number of executions. The third option is Functions Premium, which is a hybrid of the App Service Plan and the Consumption plan.

- As Azure Functions are code-first, they are typically deployed from developer tooling such as Visual Studio, Azure DevOps or FTP.

Now, let's compare that with Azure Logic Apps:

- Azure Logic Apps is a cloud service that helps you schedule, automate, and orchestrate tasks, business processes, and workflows when you need to integrate apps, data, systems, and services across enterprises or organizations.

- Logic Apps have a “Designer-First” (declarative) user experience, providing a visual workflow designer in the Azure Portal.

- Logic Apps have a large collection of connectors to Azure services, SaaS applications (Microsoft and others), FTP, and the Enterprise Integration Pack for B2B scenarios, along with the ability to build custom connectors if one isn’t available. Examples of connectors are:

- Azure services such as Blob Storage and Service Bus

- Office 365 services such as Outlook, Excel, and SharePoint

- Database servers such as SQL and Oracle

- Enterprise systems such as SAP and IBM MQ

- File shares such as FTP and SFTP

- On the Consumption plan, Logic Apps uses a pay-per-use billing model: you pay for each trigger and action execution. However, other pricing tiers are available; more information is available here.

- There are many ways to manage and deploy Logic Apps. They can be created and updated directly in the Azure Portal (which will automatically create a JSON template). The JSON template can also be deployed via Azure DevOps and Visual Studio.
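To make the “code-first” versus “designer-first” distinction concrete, here is a small Python sketch. It is not real Azure Functions or Logic Apps code: the first function hard-codes the steps, much as you would in a function app, while the second treats the steps as data that a generic engine interprets, which is roughly what a Logic Apps workflow definition is. The trigger/action format, the `.csv` filter, and names like `run_declarative` are hypothetical.

```python
# Imperative ("code-first"): the steps live in the code itself.
def handle_new_file_imperative(file_name: str) -> list[str]:
    log = []
    if file_name.endswith(".csv"):
        log.append(f"copy {file_name} to archive")
        log.append(f"email: {file_name} archived")
    return log


# Declarative ("designer-first"): the steps are data. This format is hypothetical,
# a stand-in for the JSON that the Logic Apps designer generates.
WORKFLOW = {
    "trigger": {"type": "new_file", "suffix": ".csv"},
    "actions": [
        {"type": "copy", "target": "archive"},
        {"type": "email", "template": "{file} archived"},
    ],
}


def run_declarative(workflow: dict, file_name: str) -> list[str]:
    """A tiny generic engine that interprets the workflow data."""
    log = []
    if not file_name.endswith(workflow["trigger"]["suffix"]):
        return log  # trigger condition not met, so no actions run
    for action in workflow["actions"]:
        if action["type"] == "copy":
            log.append(f"copy {file_name} to {action['target']}")
        elif action["type"] == "email":
            log.append("email: " + action["template"].format(file=file_name))
    return log


print(run_declarative(WORKFLOW, "sales.csv"))
```

Both versions produce the same steps; the difference is that changing the declarative one means editing data (what the designer does for you) rather than rewriting and redeploying code.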

The following list describes just a few example tasks, business processes, and workloads that you can automate using the Azure Logic Apps service:

- Schedule and send email notifications using Office 365 when a specific event happens, for example, a new file is uploaded.

- Route and process customer orders across on-premises systems and cloud services.

- Move uploaded files from an SFTP or FTP server to Azure Storage.

- Monitor tweets, analyze the sentiment, and create alerts or tasks for items that need review.

Concepts

These are the key concepts to be aware of:

- Logic app – A logic app is the Azure resource you create when you want to develop a workflow. There are multiple logic app resource types that run in different environments.

- Workflow – A workflow is a series of steps that defines a task or process. Each workflow starts with a single trigger, after which you must add one or more actions.

- Trigger – A trigger is always the first step in any workflow and specifies the condition for running any further steps in that workflow. For example, a trigger event might be getting an email in your inbox or detecting a new file in a storage account.

- Action – An action is each step in a workflow after the trigger. Every action runs some operation in a workflow.

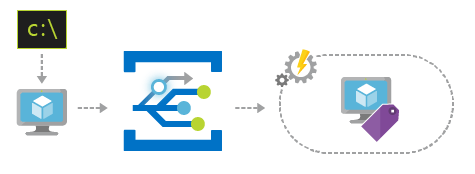
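Behind the designer, a workflow is stored as a JSON definition in the Workflow Definition Language, with the trigger under `triggers` and the steps under `actions`. The Python sketch below assembles a minimal definition with an hourly `Recurrence` trigger and a single `Http` action. The endpoint URL, the trigger and action names, and the `build_definition` helper are hypothetical, and the "at least one action" check simply encodes the rule described above rather than any validation the service itself performs.

```python
import json

WDL_SCHEMA = (
    "https://schema.management.azure.com/providers/"
    "Microsoft.Logic/schemas/2016-06-01/workflowdefinition.json#"
)


def build_definition(trigger_name: str, trigger: dict, actions: dict) -> dict:
    """Hypothetical helper: one trigger plus one or more actions."""
    if not actions:
        raise ValueError("a workflow needs at least one action after its trigger")
    return {
        "$schema": WDL_SCHEMA,
        "contentVersion": "1.0.0.0",
        "triggers": {trigger_name: trigger},  # the single trigger starts the workflow
        "actions": actions,                    # every step after the trigger
        "outputs": {},
    }


definition = build_definition(
    "Every_hour",
    {"type": "Recurrence", "recurrence": {"frequency": "Hour", "interval": 1}},
    {
        "Call_endpoint": {
            "type": "Http",
            "inputs": {"method": "GET", "uri": "https://example.com/status"},
            "runAfter": {},  # empty: runs straight after the trigger fires
        }
    },
)
print(json.dumps(definition, indent=2))
```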

How Logic Apps work

The workflow begins with a trigger, which can have a pull or push pattern. Pull triggers are initiated when a regularly scheduled process finds new updates in the source data since its last pull, while push triggers are initiated each time new data is generated in the source itself.
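The difference between the two patterns can be sketched with a toy Python model. This is not the Logic Apps runtime or API: `Source`, `PollingTrigger`, and the timestamp "watermark" are hypothetical stand-ins that show a pull trigger asking "what's new since last time?" on each scheduled check, versus a push source notifying its subscriber the moment data arrives.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Source:
    """Toy data source: stores (timestamp, item) pairs and notifies subscribers."""
    items: list[tuple[int, str]] = field(default_factory=list)
    subscribers: list[Callable[[str], None]] = field(default_factory=list)

    def add(self, ts: int, item: str) -> None:
        self.items.append((ts, item))
        # Push pattern: the source itself fires each subscriber as data arrives.
        for notify in self.subscribers:
            notify(item)


class PollingTrigger:
    """Pull pattern: each scheduled check returns only items newer than the last one."""

    def __init__(self, source: Source) -> None:
        self.source = source
        self.watermark = -1  # timestamp of the newest item seen so far

    def poll(self) -> list[str]:
        new = [item for ts, item in self.source.items if ts > self.watermark]
        if self.source.items:
            self.watermark = max(ts for ts, _ in self.source.items)
        return new


src = Source()
pushed: list[str] = []
src.subscribers.append(pushed.append)  # push-style subscriber
poller = PollingTrigger(src)           # pull-style (polling) trigger

src.add(1, "a.csv")
src.add(2, "b.csv")
first = poller.poll()   # ['a.csv', 'b.csv']
second = poller.poll()  # [] because nothing is new since the last poll
```

The trade-off shows up even in this toy: the poller only learns about data when its schedule runs, while the push subscriber reacts immediately but relies on the source supporting notifications.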

Next, users define a series of actions that run either consecutively or concurrently, based on the specified trigger and schedule. Users can export the workflow to JSON and use it to create and deploy Logic Apps with tools like Visual Studio and Azure DevOps, or they can save logic apps as Azure Resource Manager templates to reuse.
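In the exported JSON, whether actions run one after another or side by side is expressed through each action's `runAfter` property. The Python sketch below assumes a trimmed-down, hypothetical set of actions (their `inputs` are omitted) and groups them into stages: an action with an empty `runAfter` runs right after the trigger, and actions whose predecessors have all completed can run concurrently. It ignores the run statuses (such as `Failed` or `Skipped`) that real `runAfter` entries can list, so treat it as an illustration rather than how the engine actually schedules work.

```python
import json

# Hypothetical actions; "inputs" omitted for brevity. Each runAfter maps a
# predecessor action to the statuses it must finish with.
actions = {
    "Get_order": {"type": "Http", "runAfter": {}},
    "Update_stock": {"type": "Http", "runAfter": {"Get_order": ["Succeeded"]}},
    "Notify_customer": {"type": "Http", "runAfter": {"Get_order": ["Succeeded"]}},
    "Close_order": {
        "type": "Http",
        "runAfter": {"Update_stock": ["Succeeded"], "Notify_customer": ["Succeeded"]},
    },
}


def stages(actions: dict) -> list[list[str]]:
    """Group actions into stages; actions in the same stage may run concurrently."""
    done: set[str] = set()
    remaining = dict(actions)
    result: list[list[str]] = []
    while remaining:
        # An action is ready once every predecessor named in runAfter has run.
        ready = sorted(n for n, a in remaining.items() if set(a["runAfter"]) <= done)
        if not ready:
            raise ValueError("cycle or unknown action in runAfter")
        result.append(ready)
        done.update(ready)
        for n in ready:
            del remaining[n]
    return result


print(stages(actions))
# [['Get_order'], ['Notify_customer', 'Update_stock'], ['Close_order']]

# The same structure serialises straight to JSON, the format workflows are exported in.
exported = json.dumps({"actions": actions}, indent=2)
```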

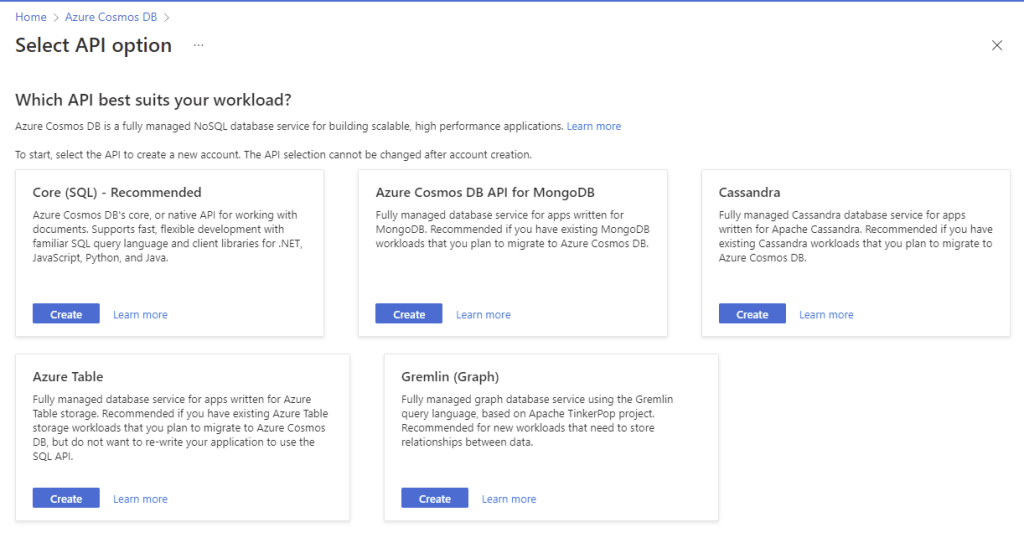

Connectors

Connectors are the most powerful part of a Logic App. They are blocks of pre-built operations that communicate with Azure and 3rd-party services as steps in the workflow. Connector actions can be combined and nested inside control actions such as conditions, loops, and scopes to build complex solutions that meet exact use case needs.

Azure provides a catalog of hundreds of connectors, and users can leverage them to accomplish tasks without any coding experience. You can find the full list of connectors here.
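In the workflow definition, a common form of nesting is a connector action placed inside a control action such as a condition. The sketch below writes a hypothetical condition (an `If` action) as a Python dict, with a managed-connector action (`ApiConnection`) in each branch, plus a small helper that walks the nested structure. The action names and the `total` field are assumptions, the connector `inputs` are omitted, and the `walk` helper only descends into `actions` and `else` blocks, so it would not cover `Switch` cases.

```python
# Hypothetical condition action in Workflow Definition Language form.
workflow_actions = {
    "Check_total": {
        "type": "If",
        "expression": {"and": [{"greater": ["@triggerBody()?['total']", 100]}]},
        "actions": {  # branch taken when the expression is true
            "Send_approval_email": {"type": "ApiConnection", "runAfter": {}},
        },
        "else": {
            "actions": {  # branch taken when the expression is false
                "Log_small_order": {"type": "ApiConnection", "runAfter": {}},
            }
        },
        "runAfter": {},
    }
}


def walk(actions: dict, depth: int = 0):
    """Yield (action name, nesting depth), descending into nested action blocks."""
    for name, action in actions.items():
        yield name, depth
        yield from walk(action.get("actions", {}), depth + 1)
        yield from walk(action.get("else", {}).get("actions", {}), depth + 1)


for name, depth in walk(workflow_actions):
    print("  " * depth + name)
```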

Use Cases

The following are common use cases for Logic Apps:

- Send an email alert to users based on data being updated in an on-premises database.

- Query a database and send email notifications based on result criteria.

- Communication with external platforms and services.

- Data transformation or ingestion.

- Social media connectivity using built-in API connectors.

- Timer- or content-based routing.

- Create business-to-business (B2B) solutions.

- Access Azure virtual network resources.

We saw in the templates above how we can use an Event Grid resource event as a trigger. The tutorial here gives an excellent run-through of creating a Logic App based on an Event Grid resource event and using the O365 Email connector.

Conclusion

So that's an overview of Azure Logic Apps and how it compares to Azure Functions. You can find out more about Azure Logic Apps in the official documentation here.

Hope you enjoyed this post, until next time!