It’s Day 25 of my 100 Days of Cloud journey, and today’s post is about Azure Storage.

Azure Storage comes in a variety of Storage Types and Redundancy models. This post gives a brief overview of these services, and I’ll try to explain which Storage Types you should be using for the different technologies in your Cloud Platforms.

Core Storage Services

Azure Storage offers five core services: Blobs, Files, Queues, Tables, and Disks. Let’s explore each and establish some common use cases.

Azure Blobs

This is Azure Storage for Binary Large Objects. It is used for unstructured data such as logs, images, audio, and video. Typical scenarios for using Azure Blob Storage are:

- Web Hosting Data

- Streaming Audio and Video

- Log Files

- Backup Data

- Data Analytics

You can access Azure Blob Storage using tools like Azure Import/Export, PowerShell Modules, Application connections, and the Azure Storage REST API.

Blob Storage can also be directly accessed over HTTPS using https://<storage-account>.blob.core.windows.net
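
To make that URL pattern concrete, here is a minimal sketch that builds the address of a single blob. The account, container and blob names are made up for illustration, and the URL will only work without credentials if the container allows anonymous read access; otherwise you would need something like a SAS token or an authenticated client.

```python
from urllib.parse import quote


def blob_url(account: str, container: str, blob_name: str) -> str:
    """Build the HTTPS URL for a blob from its account, container and name."""
    # Percent-encode the blob name, but keep "/" so virtual folders stay readable.
    encoded_name = quote(blob_name, safe="/")
    return f"https://{account}.blob.core.windows.net/{container}/{encoded_name}"


# Hypothetical names, purely for illustration.
print(blob_url("mystorageacct", "logs", "2024/app log.txt"))
```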

Azure Files

Azure Files is effectively a traditional SMB File Server in the Cloud. Think of it like a Network Attached Storage (NAS) Device hosted in the Cloud that is highly available from anywhere in the world.

Shares from Azure Files can be mounted directly on any Windows, Linux or macOS device using the SMB protocol. You can also cache an Azure File Share locally on a Windows Server in your environment (this is the Azure File Sync feature) – only locally accessed files are cached, and changes are then written back to the parent Azure Files Share in the Cloud.

Azure Files can also be directly accessed over HTTPS using https://<storage-account>.file.core.windows.net
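
As a rough illustration of what you actually point an SMB client at, the sketch below builds the share address in the two forms you are likely to meet. The account and share names are hypothetical. Keep in mind that SMB uses TCP port 445, so that port needs to be open outbound from wherever you mount the share.

```python
def smb_host(account: str) -> str:
    """Host name of the Azure Files endpoint for a storage account."""
    return f"{account}.file.core.windows.net"


def windows_unc_path(account: str, share: str) -> str:
    """UNC path as used on Windows: two backslashes, host, backslash, share."""
    return "\\\\" + smb_host(account) + "\\" + share


def smb_url(account: str, share: str) -> str:
    """smb:// style address, as used by macOS 'Connect to Server'."""
    return f"smb://{smb_host(account)}/{share}"


# Hypothetical account and share names.
print(windows_unc_path("mystorageacct", "projects"))
print(smb_url("mystorageacct", "projects"))
```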

Azure Queues

Azure Queues are used for asynchronous messaging between application components, which is especially useful when decoupling those components (e.g. microservices) while retaining communication between them. Another benefit is that these messages are easily accessible via HTTP and HTTPS.
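
The decoupling idea is easier to see in code. The sketch below is a local stand-in only: it uses Python’s built-in `queue.Queue` and a thread in place of a real Azure Queue, so none of these calls are the Azure API. The point is the shape of the pattern: the producer drops messages and moves on, and the worker picks them up in its own time.

```python
import queue
import threading


def run_demo(orders):
    """Send each order through a queue to a separate worker and return its results."""
    messages = queue.Queue()  # local stand-in for an Azure Queue
    processed = []

    def worker():
        # The consumer only knows about the queue, not about the producer.
        while True:
            message = messages.get()
            if message is None:  # sentinel value: no more work
                break
            processed.append(f"processed {message}")

    consumer = threading.Thread(target=worker)
    consumer.start()

    # The producer just enqueues and carries on; it never calls the worker directly.
    for order in orders:
        messages.put(order)
    messages.put(None)

    consumer.join()
    return processed


print(run_demo(["order-1", "order-2", "order-3"]))
```

With the real service, the queue sits in your Storage Account and both sides talk to it over HTTPS, so the producer and consumer can live in completely different applications.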

Azure Tables

Azure Table Storage stores non-relational structured data (NoSQL) in a schema-less way. Because there is no schema, it’s easy to adapt your data structure as your application’s requirements change.
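
Here is a small sketch of what “schema-less” means in practice. It uses a plain Python dictionary as an in-memory stand-in for a table, so it is not the Azure SDK. The one real Table Storage detail it borrows is that every entity is identified by a `PartitionKey` and a `RowKey`; the other property names are invented for the example.

```python
def upsert_entity(table: dict, entity: dict) -> None:
    """Insert or replace an entity, keyed by (PartitionKey, RowKey)."""
    key = (entity["PartitionKey"], entity["RowKey"])
    table[key] = dict(entity)


def query_partition(table: dict, partition_key: str) -> list:
    """Return all entities in one partition, ordered by RowKey."""
    return [entity for (pk, _), entity in sorted(table.items()) if pk == partition_key]


devices = {}  # in-memory stand-in for a table

# Two entities in the same table with different sets of properties.
upsert_entity(devices, {"PartitionKey": "dublin", "RowKey": "001", "Model": "Sensor A"})
upsert_entity(devices, {"PartitionKey": "dublin", "RowKey": "002", "Model": "Sensor B",
                        "FirmwareVersion": "2.1", "BatteryPercent": 87})

for entity in query_partition(devices, "dublin"):
    print(entity)
```

The second entity carries extra properties that the first one doesn’t have, and nothing had to be migrated to allow it.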

Azure Disks

Alongside Blobs, Azure Disks is the most common Azure Storage Type. Azure Disks are block-level storage volumes that are attached to Azure Virtual Machines, so if you are using Virtual Machines, then you are most likely using Azure Disks.

Storage Tiers

Azure offers 3 Storage Tiers that you can apply to blob data in your Storage Accounts. This allows you to store data in Azure in the most cost-effective manner:

- Hot tier – An online tier optimized for storing data that is accessed or modified frequently. The Hot tier has the highest storage costs, but the lowest access costs.

- Cool tier – An online tier optimized for storing data that is infrequently accessed or modified. Data in the Cool tier should be stored for a minimum of 30 days. The Cool tier has lower storage costs and higher access costs compared to the Hot tier.

- Archive tier – An offline tier optimized for storing data that is rarely accessed, and that has flexible latency requirements, on the order of hours. Data in the Archive tier should be stored for a minimum of 180 days.

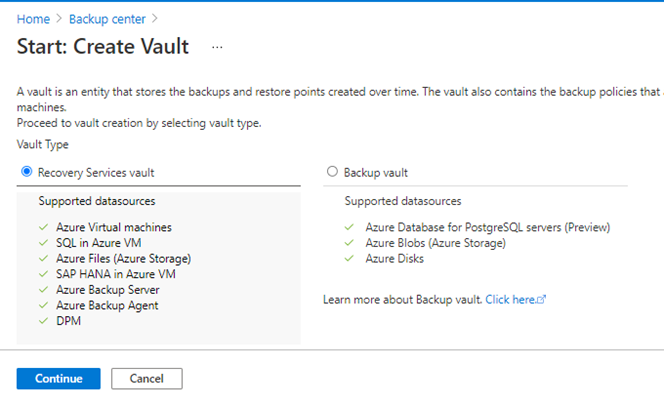
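
To tie the three tiers together, here is a rough rule-of-thumb helper. It is only a sketch built from the 30-day and 180-day minimums above; the function and parameter names are my own, and a real decision should also take current pricing and any early deletion charges into account.

```python
def suggest_access_tier(days_stored: int,
                        frequently_accessed: bool,
                        tolerates_hours_of_latency: bool) -> str:
    """Suggest Hot, Cool or Archive from a few simple facts about the data."""
    if frequently_accessed:
        # Lowest access costs matter more than storage costs here.
        return "Hot"
    if days_stored >= 180 and tolerates_hours_of_latency:
        # Offline tier: cheapest to keep, but retrieval can take hours.
        return "Archive"
    if days_stored >= 30:
        return "Cool"
    # Short-lived data does not meet the Cool tier's 30-day minimum.
    return "Hot"


print(suggest_access_tier(7, frequently_accessed=False, tolerates_hours_of_latency=False))
print(suggest_access_tier(90, frequently_accessed=False, tolerates_hours_of_latency=True))
print(suggest_access_tier(365, frequently_accessed=False, tolerates_hours_of_latency=True))
```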

Storage Account Types

For all the above Core Storage Services that I’ve listed, you will more than likely use a Standard General-Purpose v2 Storage Account, as this is the standard storage account type for all of the above scenarios. There are, however, 2 others you need to be aware of:

- Premium block blobs – used in scenarios with high transaction rates, or scenarios that use smaller objects or require consistently low storage latency

- Premium file shares – used in Azure Files where you require both SMB and NFS capabilities. The default Storage Account only provides SMB.

Storage Redundancy

Finally, let’s look at Storage Redundancy. There are 4 options:

- LRS (Locally-redundant Storage) – this creates 3 copies of your data in a single Data Centre location. This is the least expensive option but is not recommended for high availability. LRS is supported for all Storage Account Types.

Credit – Microsoft

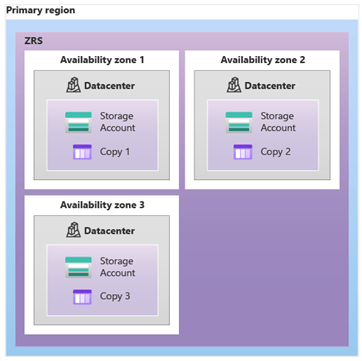

- ZRS (Zone-redundant Storage) – this creates 3 copies of your data across 3 different Availability Zones (or physically distanced locations) in your region. ZRS is supported for all Storage Account Types.

- GRS (Geo-redundant Storage) – this creates 3 copies of your data in a single Data Centre in your region (using LRS). It then replicates the data to a secondary location in a different region and performs another LRS copy in that location. GRS is supported for Standard General-Purpose v2 Storage Accounts only.

Credit – Microsoft

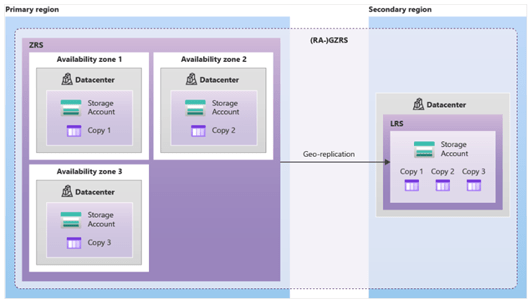

- GZRS (Geo-zone-redundant Storage) – this creates 3 copies of your data across 3 different Availability Zones (or physically distanced locations) in your region. It then replicates the data to a secondary location in a different region and performs another LRS copy in that location. GZRS is supported for Standard General-Purpose v2 Storage Accounts only.
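
The four options boil down to two questions: is the data spread across zones in the primary region, and is it also copied to a second region? The sketch below models just that. The class and field names are my own, and the “survives” properties are a simplification about whether a copy of the data still exists, not about how quickly you could get back to it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Redundancy:
    name: str
    zones_in_primary: int  # 1 = single data centre, 3 = three Availability Zones
    geo_replicated: bool   # True if a secondary region holds another LRS copy

    @property
    def total_copies(self) -> int:
        # 3 copies in the primary region, plus 3 more in the secondary if geo-replicated.
        return 3 + (3 if self.geo_replicated else 0)

    @property
    def data_survives_datacentre_loss(self) -> bool:
        return self.zones_in_primary > 1 or self.geo_replicated

    @property
    def data_survives_region_loss(self) -> bool:
        return self.geo_replicated


OPTIONS = {
    option.name: option
    for option in (
        Redundancy("LRS", zones_in_primary=1, geo_replicated=False),
        Redundancy("ZRS", zones_in_primary=3, geo_replicated=False),
        Redundancy("GRS", zones_in_primary=1, geo_replicated=True),
        Redundancy("GZRS", zones_in_primary=3, geo_replicated=True),
    )
}

for option in OPTIONS.values():
    print(option.name, option.total_copies,
          option.data_survives_datacentre_loss, option.data_survives_region_loss)
```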

Conclusion

As you can see, Storage Accounts are not just about storing files – there are several considerations you need to make around cost, tiers and redundancy when planning Storage for your Azure Application or Service.

Hope you enjoyed this post, until next time!